An Apple patent application (number 20120268410) for generating 3D objects based on 2D objects has appeared at the U.S. Patent & Trademark Office. Could this involve a 3D printer or, at least, 3D printer output?

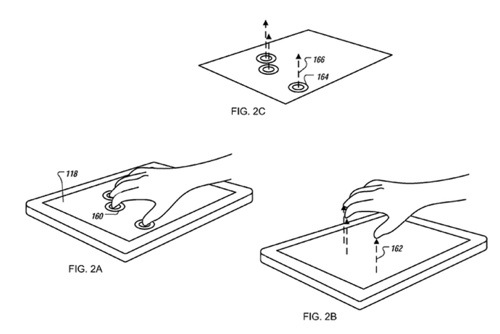

Per the filing, the system detects a first user input that identifies a 2D object presented in a user interface, followed by a second user input: a 3D gesture that includes a movement in proximity to a surface. It then generates a 3D object from the 2D object according to both inputs and presents that 3D object in the user interface.
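To make that idea concrete, here is a minimal Python sketch of one way a 2D outline could be turned into a 3D shape from a hover gesture. It is not Apple's implementation. The names `HoverSample`, `extrusion_height`, and `extrude` are hypothetical, and the choice to use the finger's peak height above the surface as the extrusion depth is an assumption made purely for illustration.

```python
from dataclasses import dataclass
from typing import List, Tuple

Point2D = Tuple[float, float]
Point3D = Tuple[float, float, float]


@dataclass
class HoverSample:
    """One hypothetical proximity reading: finger height above the surface."""
    distance: float


def extrusion_height(samples: List[HoverSample], scale: float = 1.0) -> float:
    """Assumption: the peak finger height during the gesture sets the depth."""
    if not samples:
        return 0.0
    return scale * max(s.distance for s in samples)


def extrude(outline: List[Point2D], height: float) -> List[Point3D]:
    """Turn a 2D outline into prism vertices: the base ring, then the top ring."""
    base = [(x, y, 0.0) for x, y in outline]
    top = [(x, y, height) for x, y in outline]
    return base + top


# First input: the user selects a 2D square.
square = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]
# Second input: the finger lifts away from the surface (a 3D gesture).
gesture = [HoverSample(0.0), HoverSample(2.5), HoverSample(1.0)]

vertices = extrude(square, extrusion_height(gesture))
```

In this sketch the square becomes a box with eight vertices whose top face sits at the highest point the finger reached; a real system would also need faces, rendering, and gesture filtering.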

Here's Apple's background and summary of the invention: "Computer assisted design (CAD) software allows users to generate and manipulate two-dimensional (2D) and three-dimensional (3D) objects. A user can interact with a CAD program using various peripheral input devices, such as a keyboard, a computer mouse, a trackball, a touchpad, a touch-sensitive pad, and/or a touch-sensitive display. The CAD program may provide various software tools for generating and manipulating 2D and 3D objects.

"The CAD program may provide a drafting area showing 2D or 3D objects being processed by the user, and menus outside the drafting area for allowing the user to choose from various tools in generating or modifying 2D or 3D objects. For example, there may be menus for 2D object templates, 3D object templates, paint brush options, eraser options, line options, color options, texture options, options for rotating or resizing the objects, and so forth. The user may select a tool from one of the menus and use the selected tool to manipulate the 2D or 3D object.

"Techniques and systems that support generating, modifying, and manipulating 3D objects using 3D gesture inputs are disclosed. For example, 3D objects can be generated based on 2D objects. A first user input identifying a 2D object presented in a user interface can be detected, and a second 3D gesture input that includes a movement in proximity to a surface can be detected. A 3D object can be generated based on the 2D object according to the first and second user inputs, and the 3D object can be presented in the user interface where the 3D object can be manipulated by the user.

"Three-dimensional objects can be modified using 3D gesture inputs. For example, a 3D object shown on a touch-sensitive display can be detected, and a 3D gesture input that includes a movement of a finger or a pointing device in proximity to a surface of the touch-sensitive display can be detected. Detecting the 3D gesture input can include measuring a distance between the finger or the pointing device and a surface of the display. The 3D object can be modified according to the 3D gesture input, and the updated 3D object can be shown on the touch-sensitive display.

"For example, a first user input that includes at least one of a touch input or a two-dimensional (2D) gesture input can be detected, and a 3D gesture input that includes a movement in proximity to a surface can be detected. A 3D object can be generated in a user interface based on the 3D gesture input and at least one of the touch input or 2D gesture input.

"An apparatus for generating or modifying 3D objects can include a touch sensor to detect touch inputs and 2D gesture inputs that are associated with a surface, and a proximity sensor in combination with the touch sensor to detect 3D gesture inputs, each 3D gesture input including a movement in proximity to the surface. A data processor is provided to receive signals output from the touch sensor and the proximity sensor, the signals representing detected 3D gesture inputs and at least one of detected touch inputs or detected 2D gesture inputs. The data processor generates or modifies a 3D object in a user interface according to the detected 3D gesture inputs and at least one of detected touch inputs or detected 2D gesture inputs.

"An apparatus for generating or modifying 3D objects can include a sensor to detect touch inputs, 2D gesture inputs that are associated with a surface, and 3D gesture inputs that include a movement perpendicular to the surface. A data processor is provided to receive signals output from the sensor, the signals representing detected 3D gesture inputs and at least one of detected touch inputs or detected 2D gesture inputs. The data processor generates or modifies a 3D object in a user interface according to the detected 3D gesture inputs and at least one of detected touch inputs or detected 2D gesture inputs."
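The filing describes a data processor that combines signals from a touch sensor and a proximity sensor, with the 3D gesture detected in part by measuring the finger's distance from the display. The Python sketch below shows one rough way such a step might look. The `SensorFrame` type, the `apply_gesture` function, the 5.0 sensing range, and the rule that the object's height changes by the net change in finger distance are all assumptions for illustration, not details from the patent text.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SensorFrame:
    """One hypothetical combined reading from the two sensors."""
    touching: bool    # reported by the touch sensor
    distance: float   # reported by the proximity sensor (0.0 when touching)


def apply_gesture(height: float, frames: List[SensorFrame],
                  max_range: float = 5.0) -> float:
    """Return the object's new height after a hover gesture.

    Assumption: only frames where the finger is off the surface but still
    within sensing range count as part of the 3D gesture, and the height
    changes by the net change in finger distance across those frames.
    """
    hover = [f.distance for f in frames
             if not f.touching and f.distance <= max_range]
    if len(hover) < 2:
        return height  # not enough hover data to form a 3D gesture
    return max(0.0, height + (hover[-1] - hover[0]))


frames = [
    SensorFrame(True, 0.0),    # finger touches the object
    SensorFrame(False, 1.0),   # finger lifts off
    SensorFrame(False, 3.0),   # finger pulls farther away
    SensorFrame(False, 9.0),   # out of the assumed range, ignored
]
new_height = apply_gesture(2.0, frames)
```

Here pulling the finger away from the display stretches the object, and pushing it back toward the surface would shrink the object, never below zero.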

The inventors are Nicholas V. King and Todd Benjamin.